Let's take a look at some technical aspects of voice-recognition systems, which are becoming more commonplace in today's cars.

Contributor

Voice recognition is gaining prominence as a hands-free way to control various parts of a car. However, not all systems are created equal.

Cars are becoming increasingly computerised, and as a result the automotive industry has become a fast follower of the latest advances in consumer technology, from smartphones to IoT (Internet of Things) devices such as smart speakers.

The ability to use your voice to control various in-car systems isn’t actually a new idea. Early systems included Mercedes-Benz’s Linguatronic, which made its debut in 1996 on the W140 S-Class and had the capacity to understand a grand total of 30 words (!) mostly related to controlling the car phone.

Although no system on the market today has HAL 9000-level ability (or personality), there has still been significant innovation in the space.

These advances range from voice control of almost every interior function to natural language processing, which gives the system a better ability to understand conversational speech and everyday language.

What are some of the key things to look out for when purchasing a car equipped with a voice recognition system?

Carmakers have broadly adopted three different approaches to integrating voice recognition into their vehicles.

The first, and arguably the most obvious, approach is to build a ‘native’ system, meaning the voice recognition functionality is built into the car’s own computer systems. Embedding it this way allows the carmaker to greatly expand the range of interior functions that can be controlled by voice.

For example, the latest systems from Mercedes (MBUX Voice Assistant) and BMW (Intelligent Personal Assistant, as found in models with Operating System 7.0 and 8.0) can not only help the driver set a sat-nav destination but also control a range of other functions, including the air-conditioning, sunroof and headlights, and can even tell the driver the speed limit.
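
To make the idea concrete, here is a minimal Python sketch of why a native system can reach so many functions: once speech has been turned into an intent, the handler can write straight to the car’s own systems. `CarState`, `handle_native_intent` and the intent names are hypothetical illustrations, not Mercedes or BMW code, and the speech-to-intent step is assumed to have already happened.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CarState:
    """Hypothetical snapshot of cabin systems a native assistant can reach."""
    cabin_temp_c: float = 22.0
    sunroof_open: bool = False
    headlights_on: bool = False
    speed_limit_kmh: int = 60  # placeholder value for illustration
    destination: Optional[str] = None


def handle_native_intent(car: CarState, intent: str, value: Optional[str] = None) -> str:
    """Apply an already-recognised intent directly to the car's own systems."""
    if intent == "set_destination" and value:
        car.destination = value
        return f"Navigating to {value}"
    if intent == "set_temperature" and value:
        car.cabin_temp_c = float(value)
        return f"Temperature set to {car.cabin_temp_c:g} degrees"
    if intent == "open_sunroof":
        car.sunroof_open = True
        return "Opening the sunroof"
    if intent == "headlights_on":
        car.headlights_on = True
        return "Headlights on"
    if intent == "speed_limit":
        return f"The speed limit is {car.speed_limit_kmh} km/h"
    # Anything outside the car's known functions is refused.
    return "Sorry, I can't do that"
```

For instance, `handle_native_intent(CarState(), "set_temperature", "20")` returns `"Temperature set to 20 degrees"`.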

Deep integration of a native voice recognition system means its functionality could, in the not-too-distant future, even extend to changing drive modes or adjusting the settings of a car’s adaptive suspension.

An alternative approach to in-car voice recognition, and one that is far easier for the carmaker to adopt, is to pipe through a smartphone-based voice assistant.

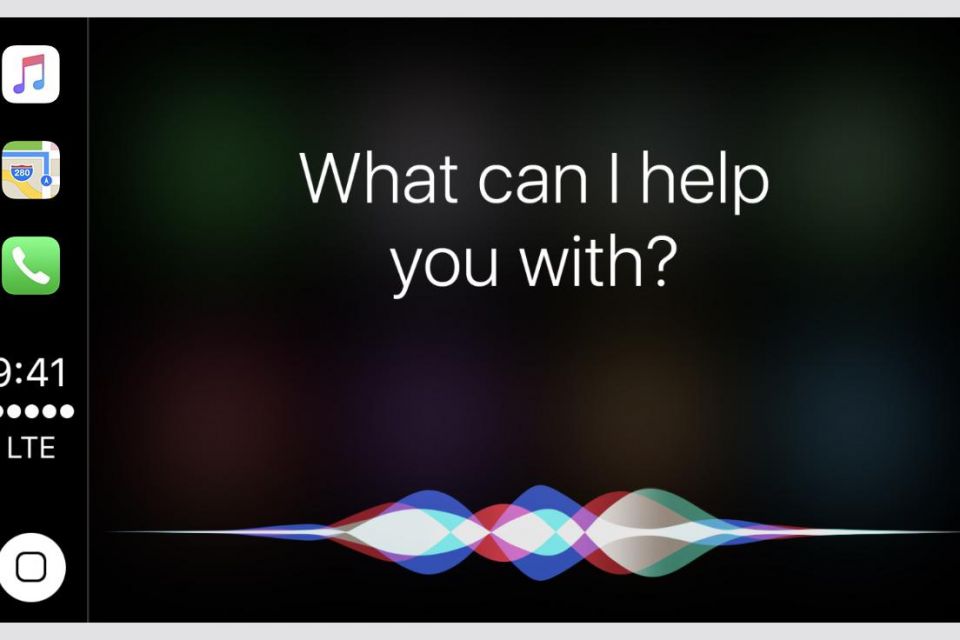

Rather than going to the effort and expense of building and embedding a system within the car’s computers, the same Siri or Google Assistant offered on the driver’s smartphone is made available in the car through Apple CarPlay or Android Auto.

Just as music played through CarPlay or Android Auto can be controlled using steering wheel-mounted buttons, the voice assistant can usually be activated via a button on the steering wheel, or by saying “Hey Siri” or “Ok Google”.

As an example, most Hyundai and Kia vehicles exclusively feature smartphone-based voice assistant functionality. This means that the dedicated voice assistant button on the steering wheel can only activate Siri or Google Assistant when Apple CarPlay/Android Auto is being used. Otherwise, the button doesn’t do anything.
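
The projection-only button behaviour described above can be modelled as a small lookup. This is a hypothetical sketch: `on_voice_button_pressed` and the projection-mode strings are invented for illustration and do not reflect Hyundai or Kia software.

```python
from typing import Optional

# Which phone assistant each projection mode exposes.
ASSISTANT_BY_PROJECTION = {"carplay": "Siri", "android_auto": "Google Assistant"}


def on_voice_button_pressed(projection: Optional[str]) -> Optional[str]:
    """Return the assistant a projection-only voice button would wake, if any.

    `projection` is the active phone-projection mode, or None when no phone
    is being projected. With no native assistant to fall back on, the press
    is simply ignored in that case.
    """
    if projection is None:
        return None
    return ASSISTANT_BY_PROJECTION.get(projection)
```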

For Apple devices, vehicles that don’t support CarPlay may instead support Siri Eyes Free. This is an earlier, pre-CarPlay integration of Siri with the car, available on certain (typically older) vehicles. For this to function, the iPhone must be connected to the car via Bluetooth. Siri can then be activated via a dedicated voice control button on the steering wheel.

Regardless of whether Siri Eyes Free, Apple CarPlay or Android Auto is being used, the processing of the voice command is done by the smartphone, and functionality is thereby limited to tasks that can be performed on a smartphone.

These systems therefore cannot control car-specific features such as the air-conditioning, the sunroof or the door locks.

However, by harnessing Apple’s and Google’s machine learning and natural language processing prowess, a smartphone-based voice assistant may have a better understanding of what the user is actually trying to say.

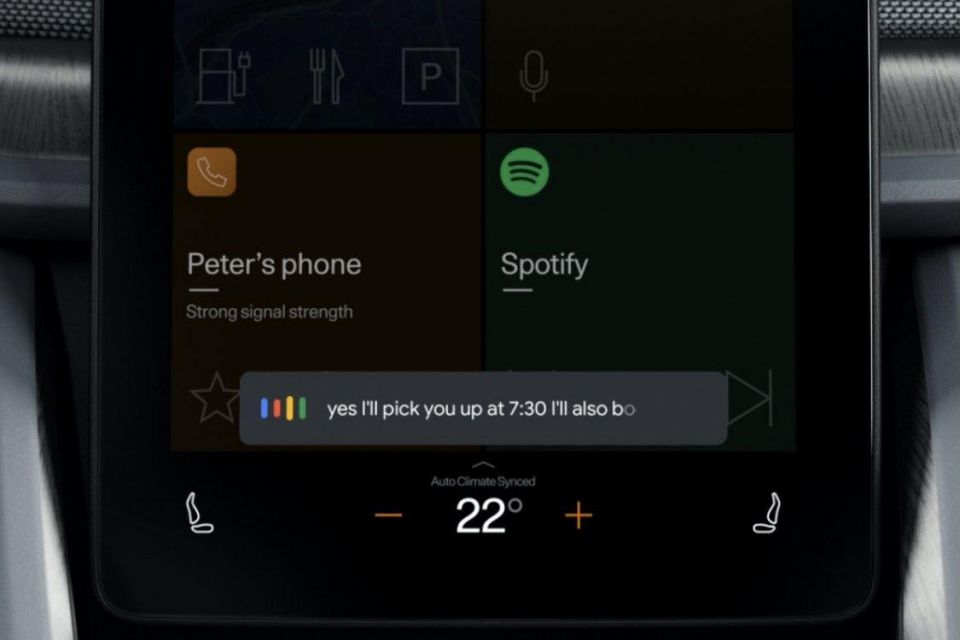

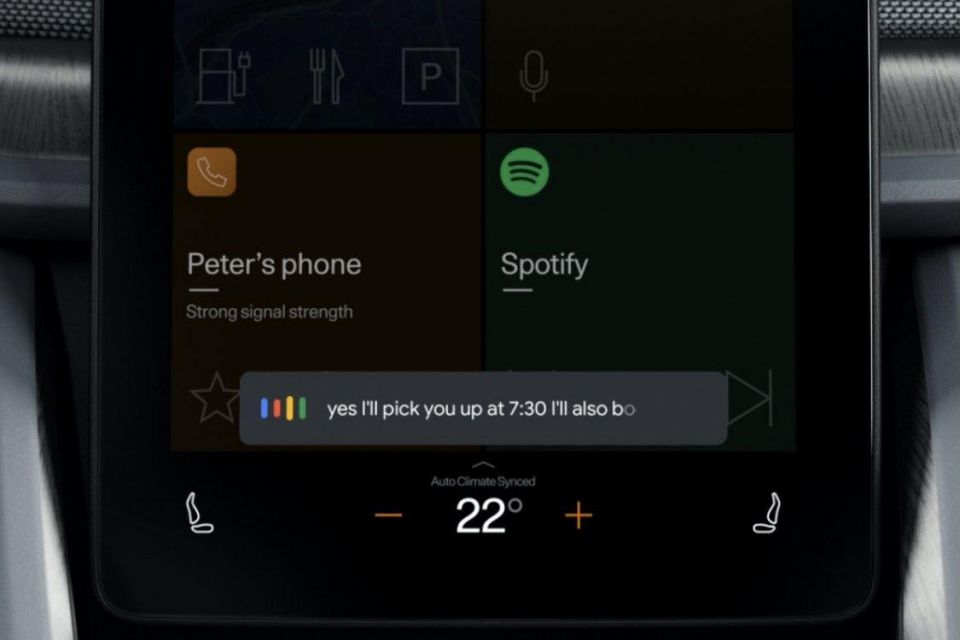

A more recent approach, seen in models such as the Polestar 2 and the upcoming Renault Megane E-Tech Electric, is to embed Google Assistant directly into the car’s computers through Android Automotive. With Android Automotive, Android runs directly as the car’s built-in infotainment system rather than being projected from a smartphone.

As a result, the Google Assistant that forms part of the Android Automotive software can function much more like a voice assistant native to the car. In practice, this implementation is a best-of-both-worlds option: it brings Google’s language understanding while also being able to operate systems such as the climate control and perform other car-specific actions.
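
The difference in reach between the three approaches can be summarised as capability sets. This is a deliberately simplified Python sketch: the task names and the sets assigned to each approach are assumptions based on the descriptions above, real systems vary by model and software version, and it does not model how well each system understands speech.

```python
from enum import Enum


class Integration(Enum):
    NATIVE = "native"                          # e.g. MBUX, BMW Intelligent Personal Assistant
    PHONE_PROJECTION = "phone_projection"      # Siri/Google Assistant via CarPlay, Android Auto, Siri Eyes Free
    ANDROID_AUTOMOTIVE = "android_automotive"  # Google Assistant built into the car


# Hypothetical, simplified task groups.
PHONE_TASKS = frozenset({"call", "message", "play_music", "navigate"})
CAR_TASKS = frozenset({"climate", "sunroof", "door_locks", "headlights"})

CAPABILITIES = {
    Integration.NATIVE: CAR_TASKS | {"navigate"},
    # Commands are processed on the phone, so only phone tasks are possible.
    Integration.PHONE_PROJECTION: PHONE_TASKS,
    Integration.ANDROID_AUTOMOTIVE: PHONE_TASKS | CAR_TASKS,
}


def can_handle(integration: Integration, task: str) -> bool:
    """Return True if the given integration approach can carry out the task."""
    return task in CAPABILITIES[integration]
```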

Another factor to consider when looking at a voice recognition system is how easy it is to use. Early systems, and some still on the market today, rely on preset voice commands.

This could mean the driver and other vehicle occupants are required to memorise a list of commands from the car’s instruction manual, or choose from a small set of words that the car will speak out (or display on the infotainment screen). Such systems may also require the user to speak very slowly and clearly, and may have difficulty recognising accents.
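
A preset-command system behaves roughly like an exact lookup table, which is why rewording a command makes it fail. The phrases and action names below are hypothetical, and real systems also have to cope with speech recognition errors that this sketch ignores.

```python
from typing import Optional

# Hypothetical fixed command list, as it might appear in an owner's manual.
PRESET_COMMANDS = {
    "call home": "phone.call_home",
    "radio on": "audio.radio_on",
    "temperature up": "climate.temp_up",
    "temperature down": "climate.temp_down",
}


def match_preset(utterance: str) -> Optional[str]:
    """Return the action for an exact preset phrase, else None.

    Only letter case and extra whitespace are normalised; any rewording fails.
    """
    normalised = " ".join(utterance.lower().split())
    return PRESET_COMMANDS.get(normalised)
```

Saying “Temperature down” matches, while “turn the temperature down” does not.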

More modern systems feature natural language processing (NLP). This means the system has a far larger vocabulary of words and phrases, and can understand everyday language and conversational speech, including implied requests.

For example, telling the system “I’m feeling too hot” implies that the cabin should be cooler. A voice recognition system with NLP will understand this and lower the temperature of the car’s A/C system.
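
Real NLP relies on trained language models, but the outcome described above, mapping an implied request to an action, can be imitated with a few hand-written rules. The following Python sketch is a toy stand-in, not how any production assistant works; the patterns, the `climate.adjust_temp` action name and the 2-degree step are all assumptions.

```python
import re
from typing import Optional, Tuple

# Toy stand-in for a trained NLP model: hand-written patterns that map
# everyday phrasing (including implied requests) to a climate action.
INTENT_RULES = [
    (re.compile(r"\b(too hot|boiling|warm in here)\b"), ("climate.adjust_temp", -2)),
    (re.compile(r"\b(too cold|freezing|chilly)\b"), ("climate.adjust_temp", 2)),
]


def infer_intent(utterance: str) -> Optional[Tuple[str, int]]:
    """Return (action, temperature change in degrees) or None if nothing matches."""
    text = utterance.lower()
    for pattern, intent in INTENT_RULES:
        if pattern.search(text):
            return intent
    return None
```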

The best way to test this is simply to visit a local dealership. Try a few commands to see how well the system understands you, and whether you can speak naturally rather than reciting set phrases.

Processing natural language and other advanced voice commands requires powerful computers, often with more processing power than is available in the car or on a smartphone. Internet connectivity is therefore critical to the operation of modern voice recognition systems.

Through online connectivity, these commands can be handed off to an off-site server that has the power to interpret the driver’s voice input and convert it into a specific instruction (code), which is then sent back to tell the in-car system what to do.
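
The round trip can be sketched as a request sent to a server and a structured instruction sent back. In this hypothetical Python example the server is simulated by a local function, the request carries text rather than recorded audio, and the JSON field names (`request_id`, `instruction`, `delta_c`) are invented for illustration; no carmaker’s actual protocol is implied.

```python
import json


def fake_server_interpret(request_json: str) -> str:
    """Simulated off-site server: turns a voice request into an instruction.

    A real server would run speech recognition and NLP on uploaded audio;
    here the request already carries text and one hard-coded rule stands in.
    """
    request = json.loads(request_json)
    if "too hot" in request["text"].lower():
        instruction = {"system": "climate", "command": "adjust_temp", "delta_c": -2}
    else:
        instruction = {"system": "none", "command": "unrecognised"}
    return json.dumps({"request_id": request["request_id"], "instruction": instruction})


def car_execute(response_json: str, cabin_temp_c: float) -> float:
    """In-car side: apply the instruction the server sent back."""
    instruction = json.loads(response_json)["instruction"]
    if instruction["system"] == "climate" and instruction["command"] == "adjust_temp":
        return cabin_temp_c + instruction["delta_c"]
    return cabin_temp_c  # unrecognised instruction: leave the setting unchanged


request = json.dumps({"request_id": "r-001", "text": "I'm feeling too hot"})
response = fake_server_interpret(request)
new_temp = car_execute(response, 22.0)  # 20.0
```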

This means that in rural areas or other locations without a fast connection, the voice recognition system may have limited or no functionality.

If you’re using CarPlay and your iPhone is running iOS 14 or earlier, for example, Siri is entirely dependent on a strong internet connection. Other systems, such as MBUX and BMW OS 7.0, may use a combination of offline and online processing, so basic commands may still work without a connection.
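
A hybrid system’s fallback logic might look something like the sketch below. It assumes the car prefers the server when connected and falls back to a small on-board phrase list otherwise; that ordering, the phrase list and the `cloud_interpret` callback are assumptions rather than documented behaviour of MBUX or BMW’s system.

```python
from typing import Callable, Optional

# Hypothetical short phrases an on-board recogniser could handle without a connection.
OFFLINE_COMMANDS = {
    "radio on": "audio.radio_on",
    "call home": "phone.call_home",
    "temperature down": "climate.temp_down",
}


def route_command(
    utterance: str,
    is_online: bool,
    cloud_interpret: Callable[[str], Optional[str]],
) -> Optional[str]:
    """Use the off-site server when connected; otherwise try on-board matching."""
    if is_online:
        return cloud_interpret(utterance)
    # Offline: only basic, preset phrases are recognised on board.
    return OFFLINE_COMMANDS.get(" ".join(utterance.lower().split()))


def cloud_stub(utterance: str) -> Optional[str]:
    """Placeholder for the server, which is assumed to understand free-form speech."""
    text = utterance.lower()
    if "hot" in text or "temperature down" in text:
        return "climate.temp_down"
    return None
```

With no connection, “Temperature down” still works, but “I’m feeling too hot” is not understood.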

Digital privacy and security are becoming increasingly important considerations, and voice recognition systems, which may send samples of the user’s voice over the internet, are no exception.

The types of privacy and security features used vary widely depending on the specific voice recognition system, and a combination of features may be implemented to prevent malicious actors or hackers from intercepting voice commands.

Typical examples of available privacy and security features include assigning random identifiers to voice requests, so that they can’t be associated with a particular individual.
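
Assigning a random identifier can be as simple as generating a fresh UUID for each request instead of attaching a user account or vehicle ID. This Python sketch uses the standard `uuid` module; the per-request scheme and the `VoiceRequest` type are assumptions, and some services may instead use a random identifier that persists for a device.

```python
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class VoiceRequest:
    """Hypothetical voice request that carries no user or vehicle identity."""
    request_id: str  # random; not derived from the user, account or car
    audio: bytes


def make_anonymous_request(audio: bytes) -> VoiceRequest:
    """Tag each request with a fresh random (version 4) UUID instead of a user ID."""
    return VoiceRequest(request_id=str(uuid.uuid4()), audio=audio)
```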

End-to-end encryption is another common technique. This means that only the car and the off-site server (the intended recipient) can access the voice transmission and corresponding instructions sent, rather than any intermediary or third-party such as an internet or mobile phone service provider.
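
Encrypting the link between the car and the server is commonly done with TLS, the same protocol that protects web browsing; the article does not say which protocol any particular system uses, so TLS here is an assumption. This Python sketch only builds the client-side settings with the standard `ssl` module: certificate and hostname checks are on, and protocol versions older than TLS 1.2 are refused.

```python
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Client-side TLS settings for the car's connection to the voice server."""
    ctx = ssl.create_default_context()  # verifies the server's certificate and hostname
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse older protocol versions
    return ctx
```

The context would then wrap the car’s network socket, for example `ctx.wrap_socket(sock, server_hostname="voice.example.com")`, where the hostname is a placeholder.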

On the other hand, some systems, such as Google Assistant, keep a history of the voice requests a user has made (depending on the settings chosen), which the user can delete at will.
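
A request history that the user can review and delete is, at its core, a keyed store with delete operations. The `VoiceHistory` class below is a hypothetical Python sketch, not Google’s API; the `keep_history` flag stands in for the user’s history setting.

```python
from datetime import datetime, timezone
from typing import Dict, List, Tuple


class VoiceHistory:
    """Hypothetical log of a user's voice requests that the user can review and delete."""

    def __init__(self, keep_history: bool = True) -> None:
        self.keep_history = keep_history  # stands in for the user's history setting
        self._entries: Dict[str, Tuple[datetime, str]] = {}

    def record(self, request_id: str, transcript: str) -> None:
        if self.keep_history:  # nothing is stored when the setting is off
            self._entries[request_id] = (datetime.now(timezone.utc), transcript)

    def list_requests(self) -> List[str]:
        """Return stored transcripts, oldest first."""
        return [text for _, text in self._entries.values()]

    def delete(self, request_id: str) -> bool:
        """Delete one entry; returns False if it was not found."""
        return self._entries.pop(request_id, None) is not None

    def delete_all(self) -> None:
        self._entries.clear()
```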
